Educators in Medicine,

In this newsletter, we continue our journey through the fundamentals of AI, its applications in medicine, and its transformative role in faculty development and education. Let’s dive into learning.

Special Episode Alert!

We recently published this article in STFM PRIMER, and I want to reflect on its implications with you.

The intersection of artificial intelligence and medical education has sparked a wave of innovation and curiosity, particularly in how it can transform the way physicians prepare for critical exams, such as the Family Medicine board exams. We explored the use of Large Language Models (LLMs) in this context. Below, we answer some essential questions that medical educators, faculty developers, and learners should consider when integrating LLMs into their board exam preparation strategies. The paper focuses on Family Medicine, but its lessons can be applied across specialties.

Introduction

The study explores the potential of Large Language Models (LLMs) like ChatGPT in assisting physicians preparing for the Family Medicine (FM) board exams. With the growing integration of AI in various fields, including medicine, the research aims to assess whether these advanced models can be effective tools for exam preparation. The introduction highlights the increasing interest in AI's role in education, particularly in enhancing study practices and supporting medical professionals in maintaining and advancing their knowledge base.

Methods

The research involved a detailed evaluation of several LLMs by subjecting them to a series of Family Medicine board exam-style questions. The models were tested on their ability to understand and respond accurately to these questions, which ranged from straightforward knowledge-based queries to more complex, clinically oriented scenarios. The performance of each model was then compared to the standard of proficiency required to pass the FM board exams. The study also analyzed the strengths and weaknesses of each LLM in terms of its applicability to medical education and its potential as a reliable study aid for physicians. The questions came from the 2022 In-Training Exam (ITE), and we used the ABFM predictive chart to express each model's likelihood of passing the actual board exam.

Some questions we want answered for readers

1. Which LLM Should I Use to Study for My FM Board Exam?

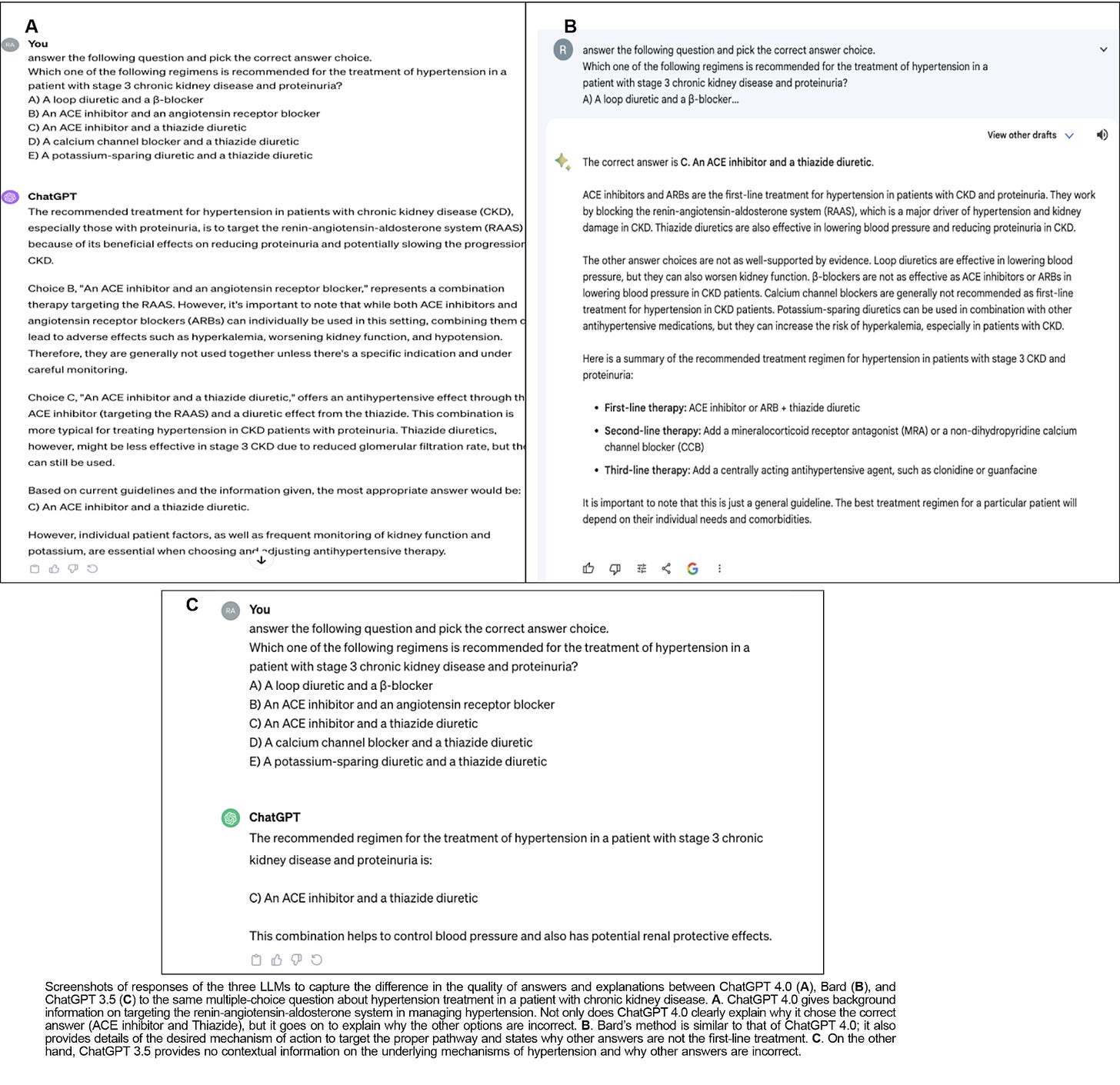

Our research evaluated three LLMs, ChatGPT 3.5, ChatGPT 4.0, and Google’s Bard (now Gemini), assessing their ability to provide accurate and relevant medical information. The choice of LLM can significantly impact your study outcomes, as different models have varying strengths in terms of language comprehension, context understanding, and the ability to generate accurate medical advice.

ChatGPT 4.0 scored the highest, followed by ChatGPT 3.5 and Bard. ChatGPT 4.0 scored 167/193 (86.5%) with a scaled score of 730.

Bard consistently scored lower in raw scores compared to ChatGPT 3.5 or ChatGPT 4.0.
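
For readers who want to check the arithmetic behind the raw score, here is a minimal Python sketch. The function name `percent_correct` is a hypothetical helper chosen for illustration. The scaled score of 730 depends on a raw-to-scaled conversion that this sketch does not attempt to reproduce.

```python
def percent_correct(correct: int, total: int) -> float:
    """Return the share of questions answered correctly, as a percentage."""
    if total <= 0:
        raise ValueError("total must be positive")
    return 100 * correct / total


# ChatGPT 4.0's raw score reported above: 167 of 193 questions.
print(f"{percent_correct(167, 193):.1f}%")  # prints 86.5%
```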

2. Can LLMs Pass the FM Board Exam Themselves?

A natural question arises: can LLMs actually pass the FM board exams? While some LLMs performed remarkably well, achieving scores that might pass certain sections of the exam, they still struggled with nuanced questions requiring deeper clinical reasoning. This suggests that while LLMs are powerful tools, they are not yet substitutes for comprehensive medical knowledge and experience. However, their performance does highlight their potential as study aids.

According to the Bayesian score predictor, and assuming the LLM was performing at the level of a PGY-3 resident, ChatGPT 4.0 would have a 100% chance of passing the family medicine board exam.

3. How Do LLMs Compare to Traditional Study Methods?

AI works best as a supplementary resource. A learner can have the AI answer a practice question and then, to guard against hallucinations, cross-reference its response with the answer key to confirm that it chose the correct answer. Once the answer is known to be correct, the AI’s reasoning and background information can be useful for reviewing previously learned material. The AI can also rework its response into a table, bullet points, or another format the user prefers. As AI improves, this study can be replicated with a different ITE to gauge the progress of LLMs and their ability to help residents and students review practice exams.
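
The check-then-review workflow described above can be sketched in Python. This is only an illustration under stated assumptions: the question IDs, answer letters, and explanations are made-up placeholders, and `ModelResponse` and `split_by_answer_key` are hypothetical names, not anything taken from the study.

```python
from typing import NamedTuple


class ModelResponse(NamedTuple):
    answer: str        # answer choice the LLM picked, e.g. "B"
    explanation: str   # the LLM's reasoning for that choice


def split_by_answer_key(responses, answer_key):
    """Separate LLM responses into those that match the official key and those that do not."""
    verified, flagged = {}, {}
    for qid, resp in responses.items():
        if answer_key.get(qid) == resp.answer:
            verified[qid] = resp
        else:
            flagged[qid] = resp
    return verified, flagged


# Placeholder data, invented for illustration only.
answer_key = {"Q1": "B", "Q2": "D", "Q3": "A"}
responses = {
    "Q1": ModelResponse("B", "placeholder explanation for Q1"),
    "Q2": ModelResponse("C", "placeholder explanation for Q2"),
    "Q3": ModelResponse("A", "placeholder explanation for Q3"),
}

verified, flagged = split_by_answer_key(responses, answer_key)
print("Review the AI's explanations for:", sorted(verified))
print("Set aside and rely on the answer key for:", sorted(flagged))
```

Only the explanations in `verified` are worth reviewing. Anything in `flagged` disagrees with the answer key and may reflect a hallucination.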

4. What Are the Limitations of Using LLMs for Board Exam Preparation?

While LLMs offer several advantages, they are not without limitations. Our article discusses some of the key challenges associated with relying on LLMs for board exam prep. These include the potential for misinformation, the risk of over-reliance on AI-generated content, and the current inability of LLMs to engage in higher-order clinical reasoning.

The high error rate limits the extrapolation of AI’s performance as an effective teaching tool. Future studies should investigate the quality and accuracy of AI’s explanations in medical education.

ChatGPT 3.5 does not accept images. Therefore, questions with associated figures were answered using text-only information for all three LLMs, which may have affected their performance.

Understanding these limitations is crucial for educators and learners to ensure that LLMs are integrated effectively and responsibly into study routines.

5. What Future Developments Could Improve the Use of LLMs in Medical Education?

Looking ahead, the potential for LLMs in medical education is vast, but there are several areas for improvement. Our article discusses potential advancements, such as the development of LLMs specifically tailored for medical education and board exams, improved accuracy in medical contexts, and better integration with existing educational tools. As these technologies evolve, we anticipate that LLMs will become even more valuable in helping physicians prepare for board exams and other professional milestones.

While AI can be used as a supplementary resource for residents, all users need to be aware of LLMs’ limitations and to use them with caution to avoid learning false information.

As always, get in touch and let me know your thoughts!

Thank you for joining us on this adventure. Stay tuned for more AI insights, best practices, and future editions of AI+MedEd.

For education and innovation,

Karim
