Episode 64

Gumbo, Jazz, and Gator

Educators in Medicine,

In this newsletter, we continue our journey through the fundamentals of AI, its applications in medicine, and its transformative role in faculty development and education. Let’s dive into learning.

I’m writing this from a small table in New Orleans, after a plate of fried chicken, sweet tea and alligator. I’ve been here for STFM with our AI Taskforce and a bunch of friends from across the country — the kind of friends you only see at conferences but who somehow pick up right where you left off last year.

Thursday night was gumbo, Friday was fried dough and sugar, and Saturday was jambalaya. Every block was jazz — the kind that pours out of an open door.

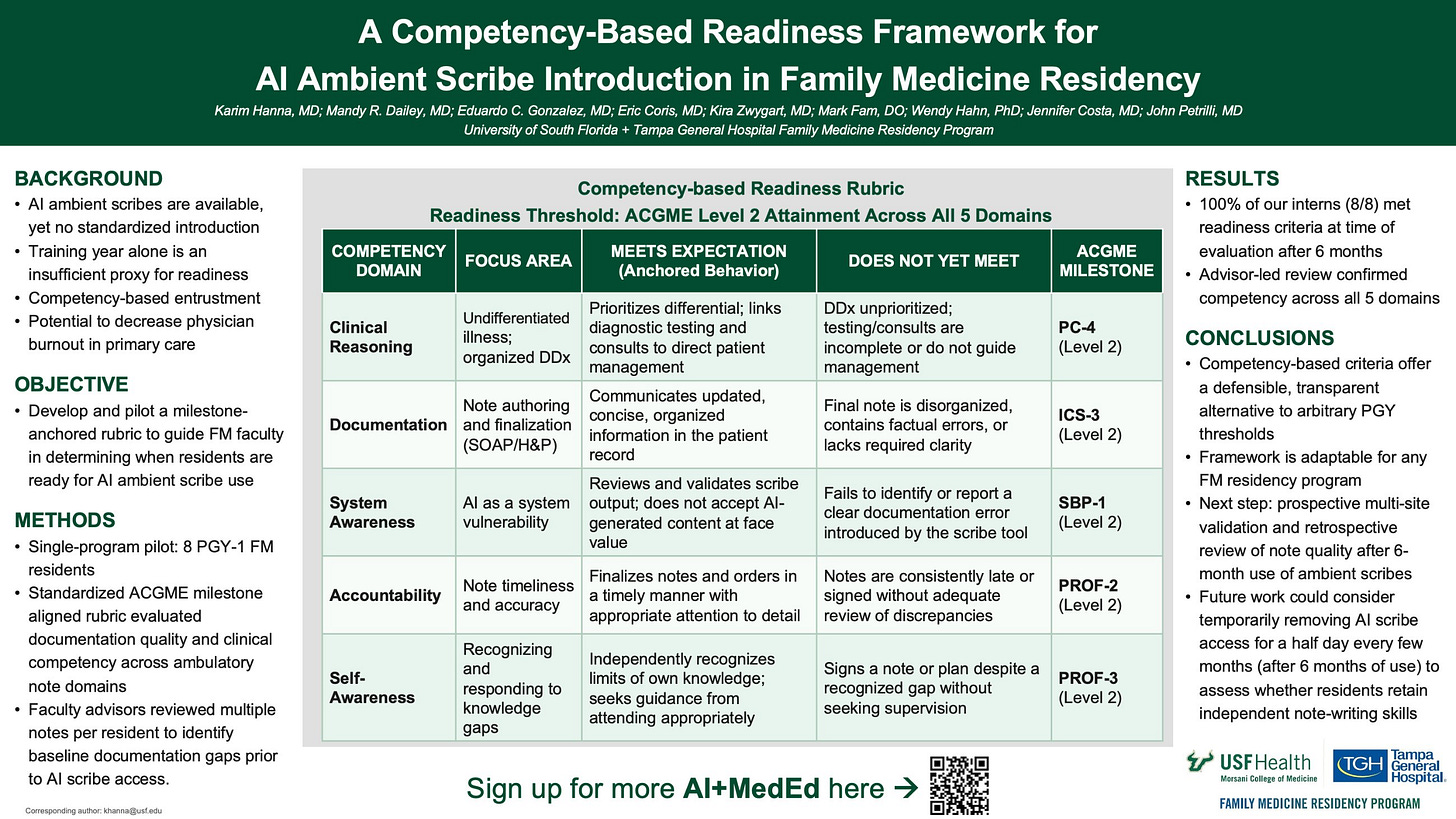

Here is our STFM 2026 poster. Feel free to download, share, or steal it. Just promise to give us a holler and show us where you put it and what you do with it!

We brought a project our team has been chewing on for a year: a competency-based readiness framework for introducing AI ambient scribes in family medicine residency. The rubric has five domains, is anchored to ACGME milestones, and sets a clear “meets / does not yet meet” threshold.

I expected a couple of passing nods, but many people said something like, “We’ve been waiting for someone to just give us a starting point.”

That, to me, is why we do these academic pursuits. The sets of live music just add to that.

🎷 The Project

Our rubric is anchored to existing ACGME milestones — Clinical Reasoning (PC-4), Documentation (ICS-3), System Awareness (SBP-1), Accountability (PROF-2), and Self-Awareness (PROF-3). The threshold for being ready to use an AI ambient scribe is Level 2 attainment across all five domains.
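For anyone dropping the rubric into a spreadsheet or evaluation platform, the threshold rule above can be sketched in a few lines of Python. This is only an illustration: the function name `readiness`, the dictionary layout, and the idea of storing each rating as a number are my assumptions, not part of the poster. The five milestone codes and the Level 2 threshold come from the rubric itself.

```python
# Milestone codes and domain names as listed in the rubric.
DOMAINS = {
    "PC-4": "Clinical Reasoning",
    "ICS-3": "Documentation",
    "SBP-1": "System Awareness",
    "PROF-2": "Accountability",
    "PROF-3": "Self-Awareness",
}

# Readiness threshold stated in the rubric: Level 2 in every domain.
READINESS_LEVEL = 2


def readiness(levels):
    """Hypothetical helper: return (meets, gaps) for one resident.

    `levels` maps each milestone code to a numeric rating (an assumed
    storage format). `gaps` lists the domains still below the threshold.
    """
    missing = [code for code in DOMAINS if code not in levels]
    if missing:
        # Refuse to decide on an incomplete review rather than guess.
        raise ValueError(f"No rating recorded for: {', '.join(missing)}")

    gaps = [name for code, name in DOMAINS.items()
            if levels[code] < READINESS_LEVEL]
    return (not gaps, gaps)


# Example with made-up ratings for a single resident.
example = {"PC-4": 2, "ICS-3": 2.5, "SBP-1": 1.5, "PROF-2": 2, "PROF-3": 2}
meets, gaps = readiness(example)
print("meets" if meets else "does not yet meet", gaps)
```

Returning the list of gaps alongside the yes/no answer is a deliberate choice in this sketch: it gives the advisor something concrete to discuss with a resident who does not yet meet the threshold.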

We piloted it with our eight PGY-1s. All eight met readiness criteria after six months of training. Faculty advisors reviewed a note they selected.

Why did it land? I think because it does three things programs have been meaning to do (timeliness is key):

It names the actual skills the resident needs before AI offloads any of them — clinical reasoning, system awareness of AI as a vulnerability, accountability for what gets signed.

It uses the milestones we already have, instead of inventing a parallel evaluation universe nobody has time to maintain.

It gives faculty a defensible answer to “why does this resident get the scribe and that one doesn’t yet?” The answer is no longer “vibes” or “PGY year.” The answer is the rubric. We intentionally moved away from chronology for that reason.

Most program directors I talked to weren’t worried about the technology. They were worried about defending the rollout to their faculty, without their residents. Perceived unfairness was a concern. Many places simply roll out scribes without a system. I’m happy to share an option for adoption (sounds cool in my head).

🎺 How to Use Our Rubric — and How to Make Your Own

If you want to use our rubric directly: the poster above is yours. Share it with your faculty, drop the five domains into your evaluation platform, and run it on your next class of interns at around the six-month mark. Tell us what works and what doesn’t. We genuinely want to know, because that could become a multi-site validation if anyone is interested!

A lighthearted closing thought. Innovation, like jazz, is mostly improvisation around a clear melody. The rubric is the melody. Your program is the improvisation.

Jazz sometimes has songs in 5/4 time, and our ears find them “pleasantly out of sync,” as I heard a speaker say at the conference. That feels like our job in medical education right now.

Start playing. Adjust as you go.

💌 As always, thanks for reading. Get in touch and let me know your thoughts!

Thank you for joining us on this adventure. Stay tuned for more AI insights, best practices, and future editions of AI+MedEd.

For education and innovation,

Karim

Share this with someone - have them sign up here.